At Inspera, we believe in Accessibility by Design.

Usability is about making software that is easy to use. Accessibility is about making it easy to use by everyone.

One of the many benefits of digital transformation in education is making the student experience fairer for all learners. We’re already seeing a greater focus on equity and inclusion in higher education. How might a fairer assessment experience manifest?

For example, imagine that on exam day all learners submit their answers on screen in an online assessment rather than on paper. The answers of those who have permission to type due to a disability or medical condition would then be indistinguishable from their peers’ answers in both delivery and marking.

What then happens is that more learners can take exams alongside their peers, and that is what inclusion is all about.

In this example, students with accommodations are not the only ones who gain from inclusion: those who struggle with handwriting also benefit from the typed digital experience.

Gone is the stigma of “special” treatment that is so often vocalized by both affected learners and their educators. By removing such barriers between learners, the experience of education is radically changed, for the better.

What Does Accessible Digital and Online Assessment Mean?

When we talk about accessibility in assessment, we are referring to the design and implementation of an assessment. This includes the features available, the experience, and how inclusive it is for those with diverse needs or disabilities.

In short, an accessible digital assessment is one that can be accessed by all individuals, equitably. This means all students can take part, regardless of whether they have any physical, cognitive, or sensory limitations.

What is the Difference Between Accessible Assessment and Inclusive Assessment in a Digital Context?

The terms ‘accessible assessment’ and ‘inclusive assessment’ are sometimes used interchangeably. The two concepts go hand in hand in achieving fair assessment. However, there is a subtle distinction that we should make between them.

Accessible assessment focuses on the way in which a digital or online assessment is formulated and delivered. It concentrates on removing roadblocks for students who might otherwise struggle with online assessment.

Inclusive assessment concentrates on welcoming students from diverse backgrounds and encompassing all learning abilities.

While accessibility and inclusivity are both about making assessments fair and equitable, inclusivity has a broader remit that affects all learners.

For example, inclusive assessment in higher education considers factors relevant to all learners, such as language proficiency and socio-cultural contexts. The latter could come in many forms, such as family attitude to education or economic background. These factors affect everyone.

The underlying learning environment also matters: how accessible and inclusive it is affects students’ assessment performance. Professor Phillip Dawson of Deakin University, Australia, addresses this issue of equitable campus experiences in our white paper Balancing assessment security and flexibility in e-assessment.

Key Elements of Accessible and Inclusive Digital and Online Assessment

So what does accessible and inclusive assessment mean in practical terms? When it comes to setting an online assessment, accessibility and inclusivity will factor in many aspects. It’s not just the capabilities of the platform itself but also the way in which the assessment is designed and the circumstances and conditions under which the exam takes place.

Here are the main factors to consider for running an accessible and inclusive online assessment:

- Accessible functionality: When deciding on your online assessment platform, be sure to look at its accessibility functions and features. Some of these features may come out of the box, and some will depend on the educator implementing them when designing their assessment. For example, your chosen platform should support screen readers. The design process should also take into account learners with visual, hearing, motor, or cognitive impairments by including alternative text for images, closed captions on videos, keyboard navigation, and adjustable font sizes and colors.

- Feedback and transparency: Assessment is not a static process. Feedback both to and from students is a vital way to ensure that the diverse needs of learners are being met. This will also help refine the assessment process, ensuring that it is measuring and meeting its goals. With a digital assessment platform, you can embed text and audio feedback in student submissions rather than using multiple platforms to deliver feedback. This enhances feedback to students, improves their experience because they can access it in one place, and gives educators greater flexibility in delivering rich feedback.

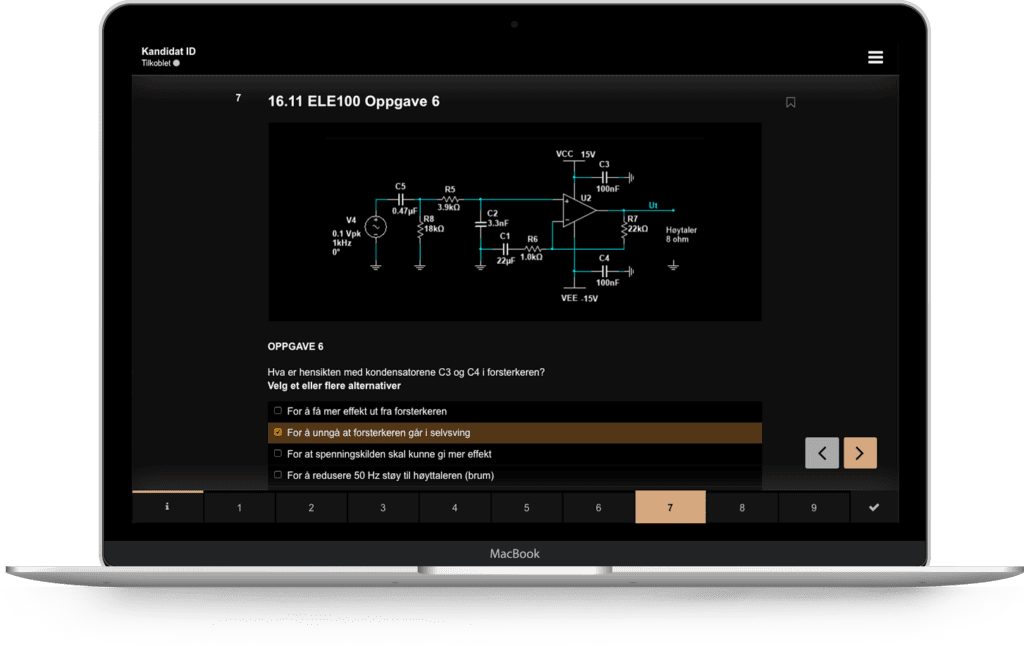

- Flexible question types: Accessibility and inclusivity can be enhanced by using a variety of question types and acceptable answers. For instance, using both multiple-choice questions and open-ended questions can effectively measure students with different learning abilities. In a digital environment, it’s simple to allow candidates to choose which question to answer where there is flexibility to offer them a choice. The same argument can be made for allowing a variety of different ways to answer a question. Response types that range from voice recordings to choosing answers from a list or simple text input offer wider accessibility opportunities for students.

- Clear and intuitive navigation: Accessibility by design extends to how clear and concise the instructions that faculty include are. Similarly, it’s important that all students are able to easily progress through the online assessment process without confusion. This means the interface needs to be user friendly, and the navigation should be intuitive.

- Device compatibility: One of the key trends in online assessment accessibility is the BYOD (Bring Your Own Device) style of exams, which allows students to access their assessment using their own technology. This means that the platform and the assessment design need to be compatible with various operating systems and devices.

- Special considerations: As with conventional in-person assessments, online assessments need to cater to students who need specific accommodations. This can include allowing some students extra time or letting them take breaks during the exam.

- Consider your language: As you will know from traditional assessment practice, to be inclusive, it’s important to consider the language barriers that might affect students of differing nationalities and non-native speakers. So the use of slang or jargon should be avoided in digital assessments too. Question and answer translations could be provided where possible.

- Avoid cultural bias: To be truly inclusive, assessments must contain no culturally biased content or questions that could alienate, confuse or offend students. The language and wording of assessments should always be carefully reviewed to ensure that they are free from stereotyping and from culturally insensitive or discriminatory language. The same is true in digital assessments. Collaborating with your team within a platform can help to identify cultural bias.
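To make the extra-time and rest-break accommodations described under "Special considerations" concrete, here is a minimal TypeScript sketch of how an individual end time could be worked out. The `Accommodation` shape and the function names are hypothetical illustrations, not part of any particular platform, and the rounding rule (round extra time up to a whole minute) is an assumption.

```typescript
// Hypothetical data model: these names are illustrative, not a real platform API.
interface Accommodation {
  extraTimePercent: number; // e.g. 25 means 25% extra working time
  breakMinutes: number;     // total rest-break allowance, added on top of working time
}

// Total sitting length in minutes for one learner.
// Assumption: extra time is rounded up to the next whole minute.
function sittingMinutes(baseMinutes: number, acc?: Accommodation): number {
  if (!acc) return baseMinutes;
  const extra = Math.ceil((baseMinutes * acc.extraTimePercent) / 100);
  return baseMinutes + extra + acc.breakMinutes;
}

// Adds the sitting length to the shared start time to get an individual end time.
function endTime(start: Date, baseMinutes: number, acc?: Accommodation): Date {
  return new Date(start.getTime() + sittingMinutes(baseMinutes, acc) * 60000);
}
```

Under these assumptions, a learner with 25% extra time and a 10-minute break allowance sits a 120-minute exam for 160 minutes, while everyone starts at the same time.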

Inspera and Web Content Accessibility Guidelines

Universal access is critical. It is our mission to make educational assessment more inclusive, fair, and relevant to the 21st century.

We have an ongoing commitment to Accessibility by Design, but achieving universal access compliance standards is just the foundation.

Our UI/UX researchers and designers continuously innovate to deliver assessments for all. New functionalities such as individual access arrangements are just a start.

“For us, accessibility and universal access is a matter of mindset. It is not only about making our product accessible to all; it is also about making it better for everyone,” says Brent Mundy, Chief Product Officer.

When planning digital transformation as an education provider, the adoption rates among learners and staff are one of the main concerns. This is an area where accessibility by design is sure to make an important impact. To ensure our solution adapts to the diverse and evolving user needs in learning inclusion, we are actively striving to meet all the necessary requirements and regulations in relation to the Web Content Accessibility Guidelines (WCAG) technical standards.

For US users, we are working to improve accessibility for all, including those with disabilities, to ensure compliance with the Americans with Disabilities Act (ADA). In addition to the WCAG standards mentioned above, we also follow Section 508 to enhance inclusivity and usability. We invite feedback from users as we continue to make necessary adjustments for a better user experience.

The four basic principles of WCAG are: perceivable, operable, understandable, and robust. For Inspera, these are reflected in the online environment where learners are submitting their exams.

As part of working to meet the WCAG standards, Inspera has implemented an accessibility test routine. This consists of following semantic markup standards, checking keyboard accessibility, testing with the screen readers of various operating systems, and using development dependencies to flag possible accessibility issues.
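As an illustration of what an automated check in such a routine can look like, the following TypeScript sketch walks a simplified element tree and flags three common problems: images without a text alternative, buttons without an accessible name, and positive `tabindex` values that disturb keyboard order. This is not Inspera's tooling. The `UiNode` type and `findIssues` function are hypothetical, and the accessible-name rule is deliberately simplified (real tools also consider `aria-labelledby` and child content).

```typescript
// Simplified stand-in for a parsed HTML element; a real check would inspect the DOM.
interface UiNode {
  tag: string;
  attrs: Record<string, string>;
  text?: string;
  children?: UiNode[];
}

// Walks the tree depth-first and returns human-readable warnings.
function findIssues(node: UiNode, path: string = node.tag): string[] {
  const issues: string[] = [];

  // Images need an alt attribute (an empty alt is valid for decorative images).
  if (node.tag === "img" && !("alt" in node.attrs)) {
    issues.push(`${path}: image has no alt attribute`);
  }

  // Buttons need visible text or an aria-label so screen readers can announce them.
  if (node.tag === "button" && !node.text?.trim() && !node.attrs["aria-label"]) {
    issues.push(`${path}: button has no accessible name`);
  }

  // A positive tabindex overrides the natural keyboard order of the page.
  if (Number(node.attrs["tabindex"]) > 0) {
    issues.push(`${path}: positive tabindex disrupts keyboard order`);
  }

  (node.children ?? []).forEach((child, i) => {
    issues.push(...findIssues(child, `${path} > ${child.tag}[${i}]`));
  });
  return issues;
}
```

A check like this complements manual keyboard and screen reader testing rather than replacing it, since many accessibility problems cannot be detected automatically.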

Inspera’s Online Assessment Accessibility

Technology is a powerful sidekick. While we ensure that Inspera meets WCAG standards, there are still many ways that educators can help create an accessible and inclusive environment for their learners using Inspera Assessment. Here are a few of them:

- Text-to-speech is an aid that allows learners to have text-based content read out loud. The learner can select any text on the page and then, by clicking the play button, can hear the text read out loud. The voice will read the text in the same language the learner has selected as the interface language. We support 24 languages, including the American and British forms of English, as well as many European, Nordic and Asian languages.

- High contrast might improve readability for users with visual impairments. This feature is activated by the learner in their options menu.

- By default, font sizes, line spacing, and spacing between questions are designed to maintain the readability of the information presented on the available screen space. In addition, the learner has the option to set the root font size to small, medium, or large.

- Learners with dyslexia might have a formal right to use a spell-checker in an assessment. This aid is available for essay questions in two variations: ordinary or as-you-type spell-checker.

- Semantic markup is the foundation of making the web accessible. Inspera Assessment supports this standard, which enables a range of features, including support for screen readers, keyboard navigation, braille keyboards, and zooming. Read more about it here.

- Accessibility by design is more than technical WCAG compliance. We also need to cater to individual access arrangements:

  - Enable/disable spell-checker;

  - Allocate extra time before or during the examination;

  - Pause and resume lock-down browser (i.e. Inspera Safe Exam Browser);

  - Add attachments to the learner’s submission, for example when the learner is exempted from using Inspera Assessment and has to use another tool to create their answer.
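For readers curious about what sits behind a high-contrast option like the one listed above, WCAG 2.x defines a contrast ratio between text and background colors, with a minimum of 4.5:1 for normal text and 3:1 for large text at level AA. The TypeScript sketch below computes that ratio from two `#rrggbb` colors. The function names are illustrative and this is not Inspera's implementation.

```typescript
// Converts one 8-bit sRGB channel to its linear value, following the WCAG 2.x definition.
function linearize(channel: number): number {
  const c = channel / 255;
  return c <= 0.03928 ? c / 12.92 : Math.pow((c + 0.055) / 1.055, 2.4);
}

// Relative luminance of a "#rrggbb" color (0 = black, 1 = white).
function relativeLuminance(hex: string): number {
  const clean = hex.replace(/^#/, "");
  if (!/^[0-9a-f]{6}$/i.test(clean)) {
    throw new Error(`Expected a #rrggbb color, got "${hex}"`);
  }
  const r = linearize(parseInt(clean.slice(0, 2), 16));
  const g = linearize(parseInt(clean.slice(2, 4), 16));
  const b = linearize(parseInt(clean.slice(4, 6), 16));
  return 0.2126 * r + 0.7152 * g + 0.0722 * b;
}

// WCAG contrast ratio, from 1 (identical colors) up to 21 (black on white).
function contrastRatio(foreground: string, background: string): number {
  const l1 = relativeLuminance(foreground);
  const l2 = relativeLuminance(background);
  return (Math.max(l1, l2) + 0.05) / (Math.min(l1, l2) + 0.05);
}

// Level AA check: 4.5:1 for normal text, 3:1 for large text.
function meetsAA(foreground: string, background: string, largeText = false): boolean {
  return contrastRatio(foreground, background) >= (largeText ? 3 : 4.5);
}
```

For example, `meetsAA("#767676", "#ffffff")` passes for normal text, whereas the slightly lighter `#777777` on white only passes when `largeText` is `true`.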

Learn More

Do you want to learn more about how to conduct online exams and assessments? Or how Inspera Assessment can make your assessments accessible, secure, valid, and reliable? You’re in luck – we wrote a “Guide to Online Exams and Assessments”.

Alternatively, you can contact us about your digital assessment goals or to book a demo.